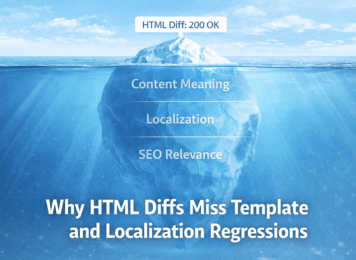

A page can stay live, return 200 OK, and still lose the parts that matter most. That is why silent regressions keep slipping through on multilingual, template-driven, JavaScript-heavy sites. Raw HTML diffs are loud, but they rarely reveal whether the page meaning actually changed. For SaaS and eCommerce teams, the real risk is not that markup changed. It is that a key block, language variant, or rendered element changed in a way that weakens SEO, trust, or conversion while monitoring still says everything is fine.

Why HTML Diffs Miss Template and Localization Regressions

Nadiia Sidenko

2026-04-20

Why HTML Diffs Often Lie

HTML diffs are good at spotting movement, but poor at judging impact. On modern websites, they highlight code motion while missing the changes that actually damage search visibility, message clarity, or conversion.

Template Noise vs Real SEO Regressions

Modern pages are in constant motion. A template update can reorder wrappers, rename classes, inject scripts, or reshuffle non-critical markup without changing what the page says. A raw diff sees movement everywhere and overstates the risk. In practice, much of that movement has little or no SEO value.

That creates a bad habit: teams start reacting to technical motion instead of business impact. This is the same pattern behind alert fatigue. When alerts are tied to noisy markup churn, real regressions become easier to ignore.

Markup Churn and False SEO Alerts

A/B tests, personalization layers, third-party widgets, and frontend deployments can rewrite large parts of the HTML while leaving page intent untouched. The reverse is often more dangerous: the code can look stable while the rendered page quietly loses a CTA, pricing detail, policy line, or localized heading.

That is why HTML movement alone is a weak signal. It tells you that something moved, not whether the page became less relevant, less trustworthy, or less likely to convert.
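
The difference between markup movement and meaning can be sketched with a comparison of text content instead of raw source. The example below is a minimal illustration, not a production monitor: the `VisibleText` class, the `lost_text` helper, and the sample snippets are all hypothetical, and "visible" here only means "not inside script or style tags" (text hidden by CSS is not detected).

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text nodes, skipping content that is never shown as copy."""

    SKIP = {"script", "style", "noscript", "template"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Normalize whitespace so indentation changes do not count as changes.
        if not self._skip_depth and data.strip():
            self.parts.append(" ".join(data.split()))


def visible_text(html):
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return parser.parts


def lost_text(old_html, new_html):
    """Return text fragments present in the old page but missing in the new one."""
    new_parts = set(visible_text(new_html))
    return [part for part in visible_text(old_html) if part not in new_parts]


# Hypothetical snapshots of the same page.
before = '<div class="hero"><h1>Pricing</h1><a class="btn" href="/signup">Start free trial</a></div>'
refactor = '<section class="hero-v2"><div class="wrap"><h1>Pricing</h1><a class="cta" href="/signup">Start free trial</a></div></section>'
regression = '<div class="hero"><h1>Pricing</h1></div>'

print(lost_text(before, refactor))    # []
print(lost_text(before, regression))  # ['Start free trial']
```

The template refactor rewrites almost every tag and class, yet nothing is reported as lost. The regression touches far less markup and still drops the CTA text, which is the change worth alerting on.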

What Actually Breaks SEO

The most expensive regressions rarely look dramatic at first. Monitoring stays green, the page returns 200 OK, and the release appears clean, yet relevance, clarity, and conversion support have already started to slip. That is exactly why these failures are costly: they look operationally safe while quietly weakening what search engines and users actually evaluate.

Localization Mismatches in Multilingual SEO

Localization problems rarely show up as outages. A template gets translated while the main block stays in the wrong language. A regional page points to the wrong variant. A fallback page starts absorbing traffic that should land on a more relevant version. Google recommends explicitly mapping the localized versions of a page to one another with hreflang annotations, and the W3C recommends declaring the page language in the HTML itself with the lang attribute.

These issues matter because multilingual SEO is not built on uptime. It is built on alignment. When language signals, page variants, and visible content stop matching, the page can remain fully reachable while becoming less trustworthy, less relevant, and harder for search engines to interpret correctly.
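
A basic alignment check for these signals might look like the sketch below. It assumes the page declares its language with a `lang` attribute on `<html>` and lists its variants as `<link rel="alternate" hreflang="...">` tags in the head. The `language_issues` function, the URLs, and the sample markup are hypothetical, and the check does not detect body copy written in the wrong language, which would need separate language detection.

```python
from html.parser import HTMLParser


class LanguageSignals(HTMLParser):
    """Reads the declared page language and the hreflang alternates."""

    def __init__(self):
        super().__init__()
        self.html_lang = None
        self.alternates = {}  # hreflang value -> href

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "html":
            self.html_lang = attr.get("lang")
        elif tag == "link":
            rel = (attr.get("rel") or "").lower().split()
            if "alternate" in rel and attr.get("hreflang"):
                self.alternates[attr["hreflang"].lower()] = attr.get("href")


def language_issues(html, expected_lang, page_url):
    """Return human-readable mismatches between the page and its target market."""
    signals = LanguageSignals()
    signals.feed(html)
    signals.close()

    issues = []
    declared = (signals.html_lang or "").lower()
    expected = expected_lang.lower()
    if not declared:
        issues.append("missing lang attribute on <html>")
    elif declared.split("-")[0] != expected.split("-")[0]:
        issues.append(f"<html lang> is '{declared}', expected '{expected_lang}'")
    # Assumption: each variant should list itself among its hreflang alternates.
    if signals.alternates.get(expected) != page_url:
        issues.append(f"hreflang '{expected_lang}' does not point to {page_url}")
    return issues


# Hypothetical German page that still carries the English language declaration.
german_page = (
    '<html lang="en"><head>'
    '<link rel="alternate" hreflang="de" href="https://example.com/de/service">'
    '<link rel="alternate" hreflang="en" href="https://example.com/en/service">'
    "</head><body><h1>Unsere Leistungen</h1></body></html>"
)

print(language_issues(german_page, "de", "https://example.com/de/service"))
```

The page in this example would return 200 OK and pass an uptime check, while the declared language no longer matches the market it is meant to serve.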

Critical Content Loss on High-Value Pages

Some regressions are small enough to escape release reviews but large enough to hurt revenue. A product page loses its pricing block. A landing page drops the section that explains why the offer matters. A category page keeps the shell but loses the text that supports relevance. A service page keeps its title, yet the body copy no longer carries the intent.

This is where SEO monitoring signals matter more than uptime alone. A page can stay technically reachable while losing the exact content that helps it rank, reassure, and convert.

Content Diff vs HTML Diff

This is where most teams misread the signal. Raw HTML diffs make change look obvious, but they do a poor job of showing whether the page still communicates the right meaning. That gap between visible code movement and actual page quality is where silent SEO regressions tend to hide.

Rendered Content Changes vs Technical HTML Changes

Search engines do not treat every piece of raw markup equally. On JavaScript-heavy sites, Google works from the rendered page state, not just the first response. Its guidance on rendered HTML makes the core point clear: what matters is often the final output, not the first code snapshot.

A better question is not “Did the HTML change?” but “Did the page still preserve its meaning?” That includes missing headings, broken internal links, removed copy, weakened trust sections, lost structured blocks, and visible content shifts across language or market versions. Competitors often stop at change detection. The harder and more useful question is whether the page still supports search intent once the release is live.

What Matters More Than Raw HTML Diffs

The strongest monitoring signals are tied to page purpose: critical copy, navigation paths, trust elements, pricing, and the core sections that make the page relevant for search intent. On some dynamic pages, a quick check of structured data with JavaScript can still be useful, but only as a supporting signal when visible content and markup drift apart.
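
As one possible supporting check, the sketch below compares a price declared in JSON-LD structured data with the text of the page. It is written in Python for illustration and makes simplifying assumptions: the HTML passed in is already the rendered output, each JSON-LD block is a single object with an `offers.price` field, and the `price_drift` function and sample pages are hypothetical.

```python
import json
from html.parser import HTMLParser


class PageParts(HTMLParser):
    """Separates JSON-LD blocks from the text content of the page."""

    def __init__(self):
        super().__init__()
        self.json_ld = []
        self.text = []
        self._mode = None  # "jsonld", "skip", or None for normal text
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            script_type = (dict(attrs).get("type") or "").lower()
            self._mode = "jsonld" if script_type == "application/ld+json" else "skip"
            self._buffer = []
        elif tag == "style":
            self._mode = "skip"

    def handle_endtag(self, tag):
        if tag == "script" and self._mode == "jsonld":
            self.json_ld.append("".join(self._buffer))
        if tag in ("script", "style"):
            self._mode = None

    def handle_data(self, data):
        if self._mode == "jsonld":
            self._buffer.append(data)
        elif self._mode is None and data.strip():
            self.text.append(" ".join(data.split()))


def price_drift(html):
    """Report structured prices that no longer appear in the page text."""
    parts = PageParts()
    parts.feed(html)
    parts.close()
    visible = " ".join(parts.text)

    drift = []
    for raw in parts.json_ld:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            drift.append("unparseable JSON-LD block")
            continue
        offers = data.get("offers") if isinstance(data, dict) else None
        price = offers.get("price") if isinstance(offers, dict) else None
        if price is not None and str(price) not in visible:
            drift.append(f"structured price {price} not found in visible text")
    return drift


markup = '<script type="application/ld+json">{"@type": "Product", "offers": {"price": "49.00"}}</script>'
healthy = markup + "<h1>Team plan</h1><p>$49.00 per month</p>"
drifted = markup + "<h1>Team plan</h1><p>Contact sales</p>"

print(price_drift(healthy))  # []
print(price_drift(drifted))  # ['structured price 49.00 not found in visible text']
```

A mismatch like this does not prove a regression on its own, but it flags pages where the markup and the visible content have started to say different things.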

| Scenario | What raw HTML diff shows | What actually changed | SEO / business risk | Better signal |
|---|---|---|---|---|
| Template refactor | Many markup changes | Same page meaning | High alert noise | Critical section checks |
| Language rollout | Small text changes | Wrong language on key block | Weaker relevance, trust loss | Per-language content checks |
| CTA removed | Minor block deletion | Conversion path broken | Lost leads or sales | CTA presence monitoring |
| JS render issue | Little in source HTML | Missing visible content | Thin or incomplete page state | Rendered-content checks |

A useful monitoring setup focuses on the last three columns (what actually changed, the risk, and the better signal), not on what the raw HTML diff shows.

Where Silent Regressions Begin

Most silent regressions do not begin with an outage, a red dashboard, or a dramatic post-release failure. They begin inside ordinary release cycles, shared components, and multilingual updates that look harmless in QA. The damage shows up later, when rankings soften, trust signals weaken, or conversion paths start to blur.

SEO Risks from Template and CMS Updates

Silent regressions often begin inside ordinary releases. A CMS update changes a shared component. A frontend deployment alters rendering order. A reusable block disappears across a whole set of pages. Google’s guidance on rendering issues in JavaScript sites shows how easily rendered output, routing, indexing behavior, and error handling can drift away from what teams expect.

This is also why content regressions after deploys are easy to miss. The release passes. The page stays online. Monitoring looks calm. Only later do teams notice weaker rankings, softer engagement, or lower-quality leads and realize the page meaning changed long before the dashboard admitted it.

Language Rollout Gaps and SEO Drift

Regional launches create another weak point because speed usually beats precision. Teams reuse templates, ship partial translations, and push market edits fast to keep launches moving. That may be efficient operationally, but it is risky for SEO when critical text, internal linking, or page intent stops matching the target version.

These gaps are especially common on sites with shared layouts across regions, where the template stays familiar while the real SEO work depends on a handful of localized details being exactly right.

What Better SEO Monitoring Looks Like

The strongest monitoring setups do not react to every deployment artifact. They protect the elements most likely to drift on multilingual, template-driven sites: rendered headings, localized copy, CTA blocks, pricing, and internal links.

Monitor Rendered Content, Not HTML Noise

On these sites, the most useful checks sit closer to search intent than to source code changes. They verify whether the rendered page still carries the sections that make it relevant in each market version, not whether the markup tree changed. A template update that rewrites containers is not the same risk as a page that quietly loses its value proposition, internal links, or localized headings.

Alert on Business-Critical SEO Changes

The right alert is not “page changed.” It is “the German service page lost its primary heading” or “the pricing block disappeared.” That is the difference between noisy monitoring and SEO regression monitoring. On multilingual, template-driven sites, useful alerts name the loss clearly enough to show what broke and where.
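
One way to produce alerts of that kind is to compare small snapshots of the elements a page is supposed to carry, then describe each loss in plain words. The sketch below is illustrative only: the `Snapshot` structure, the block names, and the message wording are assumptions, and how the snapshots are extracted from the rendered page is left out.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Snapshot:
    """What a monitored page carried at one point in time (hypothetical shape)."""

    label: str                 # e.g. "German service page"
    h1: Optional[str]          # primary heading text, or None if absent
    blocks: set = field(default_factory=set)  # names of critical blocks found


def named_alerts(before, after):
    """Describe what was lost between two snapshots of the same page."""
    alerts = []
    if before.h1 and not after.h1:
        alerts.append(f"{after.label} lost its primary heading")
    for block in sorted(before.blocks - after.blocks):
        alerts.append(f"{after.label}: the {block} block disappeared")
    return alerts


before = Snapshot("German service page", "Unsere Leistungen", {"pricing", "cta"})
after = Snapshot("German service page", None, {"cta"})

for alert in named_alerts(before, after):
    print(alert)
# German service page lost its primary heading
# German service page: the pricing block disappeared
```

Because the comparison only looks at named, business-critical elements, a release that reshuffles wrappers or classes produces no alert at all.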

Conclusion

An HTML diff is a blunt instrument. It catches motion, not meaning. On multilingual and template-driven sites, the losses that matter usually come from drift between templates, rendered content, and localized page intent, not from the fact that the source code changed.

The teams that catch these problems earlier do not monitor every markup fluctuation. They monitor whether high-value pages still preserve the copy, structure, language signals, and business-critical elements that make them useful in search. That is what helps them catch silent regressions before the losses show up in rankings, erode trust, or hit conversions.
